You have a finished track and you want a video for it. Open one browser tab and you find VidMuse, which promises to turn any Suno link or MP3 into a visual in minutes. Open another and you find Atlabs, which takes the same audio and runs it through a four-step workflow that reads the tempo, mood, and genre before generating anything. Both tools claim to do the same thing. They do not. This comparison breaks down exactly what each tool does, how their interfaces actually work, and which one is right depending on what you need out of a music video.

Why Creators Are Evaluating Both Tools

Independent artists, producers, and content creators are increasingly looking for a tool that does more than generate a clip. The problem with most AI video tools is that music is an afterthought. You generate a visual, attach audio, and call it a music video. What creators actually want is a tool where the music drives the visual output, not one where it gets layered on after the fact. VidMuse and Atlabs both position themselves around music-first video generation, which is why they keep appearing in the same searches. But the underlying approach, the level of control available, and the ceiling of what each tool can produce are meaningfully different.

The practical questions creators run into: How much can you steer the creative direction of the video? Does the tool actually respond to what your music sounds like, or does it just take a style prompt and generate something adjacent? What does the free tier actually produce? And what does it cost to get commercial-quality output without a watermark?

Quick Comparison

| Feature | Atlabs AI | VidMuse | Winner |
|---|---|---|---|
| Music input | Upload MP3 or WAV (up to 200 MB) | Paste Suno link or upload MP3 | Tie |
| Audio analysis | Auto-detects Genre, BPM, Mood, Language | No audio analysis; style set via text prompt | Atlabs |
| Creative direction | 6 AI narrative concepts from your audio | 4 preset video types (Story, Abstract, Performance, Viral) | Atlabs |
| Visual styles | 20+ named styles (Cyberpunk Anime, Noir...) | Model-dependent (Kling, Veo, Midjourney, etc.) | Atlabs |
| Aspect ratios | 16:9, 9:16, 1:1 | 16:9 or 9:16 only | Atlabs |
| Free tier output | Depends on credit plan | 720p with watermark, no commercial use | Atlabs |
| 1080p access | Included | Pro plan only ($33/mo) | Atlabs |
| Watermark removal | Included | Pro plan only | Atlabs |
| Commercial use | Yes | Pro plan only | Atlabs |
| Video length | Full-length tracks supported | 30-60s recommended | Atlabs |
| Post-production | Motion Control, Lip Sync, Reframe, Upscale | Not available | Atlabs |
| Model choice | Atlabs proprietary pipeline | Kling, Veo 3.1, Sora 2, Midjourney V7+ | VidMuse |
| Entry price (paid) | Own credit system | $33/month (Pro) | Tie |

Atlabs AI: A Complete Music Video Studio

Open app.atlabs.ai/new-music and you see a four-step progress bar at the top of the screen: Add Music, Set Style, Direction, Cast. That structure is not cosmetic. Each step feeds directly into the next, and the entire visual output of the video is shaped by decisions made at each stage. This is what separates Atlabs from VidMuse: it builds the video from the inside out, starting with what your music actually sounds like.

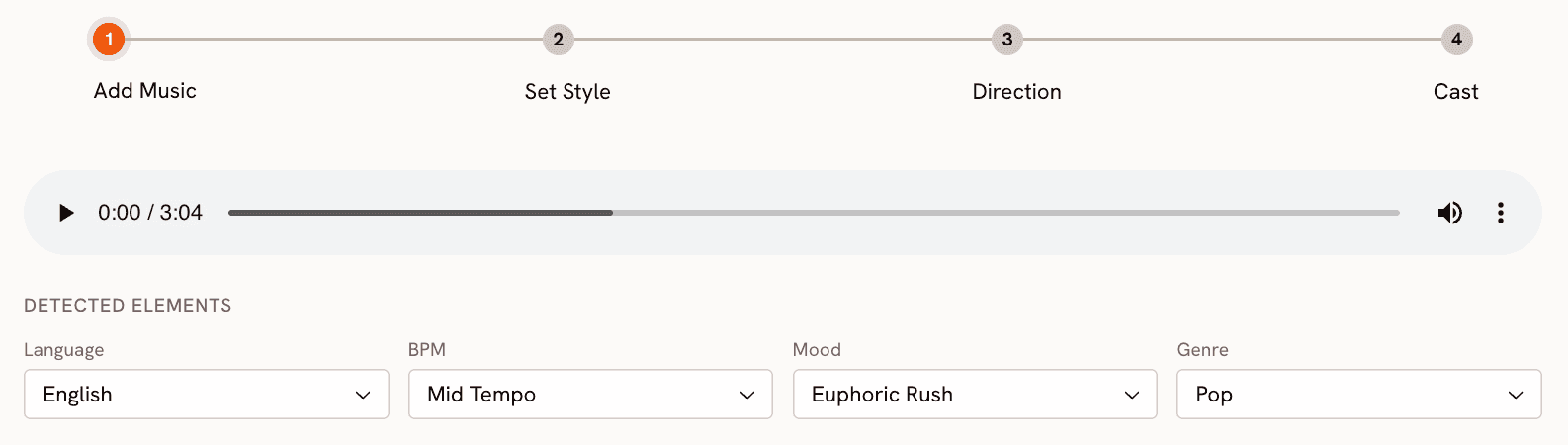

Step 1: Add Music — Atlabs Reads the Track, Not Just the Title

Drop your MP3 or WAV file (up to 200 MB) onto the upload zone. Once the file processes, Atlabs runs audio analysis on it and auto-detects four attributes: Genre, BPM, Mood, and Language. For a dark trap track, you might see Genre: Hip Hop, BPM: Fast Tempo, Mood: Aggressive. For a melodic pop ballad, Mood might come back as Romantic or Uplifting with a Mid Tempo BPM reading. Every field is editable. If the detection missed the feel of the track, correct it before proceeding.

This step matters because the detected values are not cosmetic labels. They are the inputs that generate the Creative Direction concepts in Step 3. A Fast Tempo, Aggressive track produces fundamentally different narrative concepts than a Slow Tempo, Nostalgic one, even if both are paired with the same visual style. The music is not decoration here. It is the creative brief.

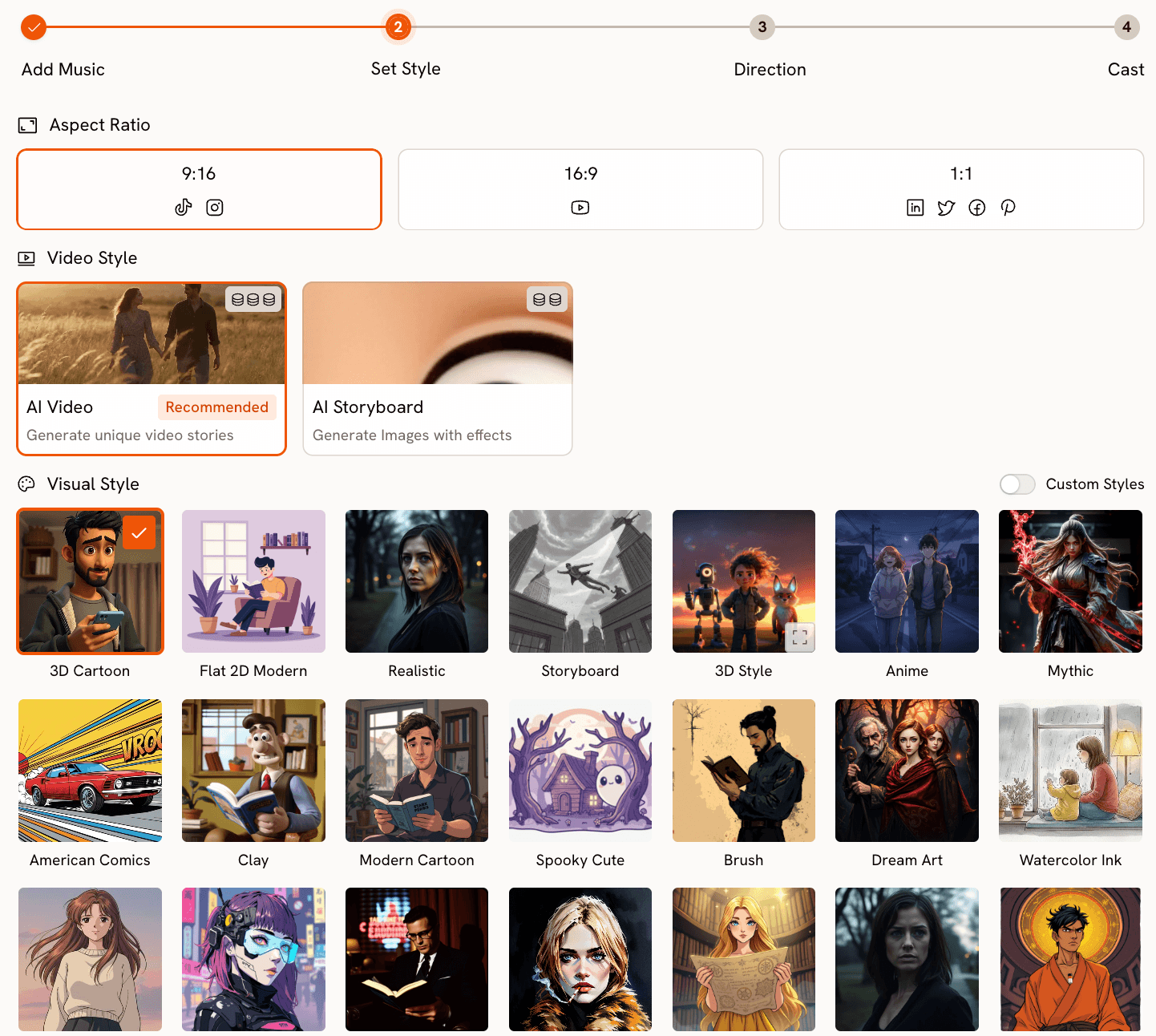

Step 2: Set Style — 20+ Named Visual Options Across Three Aspect Ratios

The Set Style step asks you to choose an aspect ratio (9:16 for TikTok and Reels, 16:9 for YouTube, 1:1 for Twitter and LinkedIn), a video type (AI Video for a full moving narrative, or AI Storyboard for a sequence of image-based frames with effects), and a visual style from a library of over twenty named options.

The visual style names tell you exactly what you are getting. Cyberpunk Anime produces neon-lit, high-contrast futuristic scenes. Cinematic produces film-grade storytelling with directional light and desaturated tones. Noir is dark, shadowed, and monochromatic. American Comics uses bold outlines and heavy contrast. Watercolor Ink produces soft, painterly frames with visible brushwork. Fantasy Horror introduces dark, surreal, and otherworldly imagery. You are not describing an aesthetic to an AI and hoping for the best. You are selecting from a defined, named library where each style has a consistent visual identity.

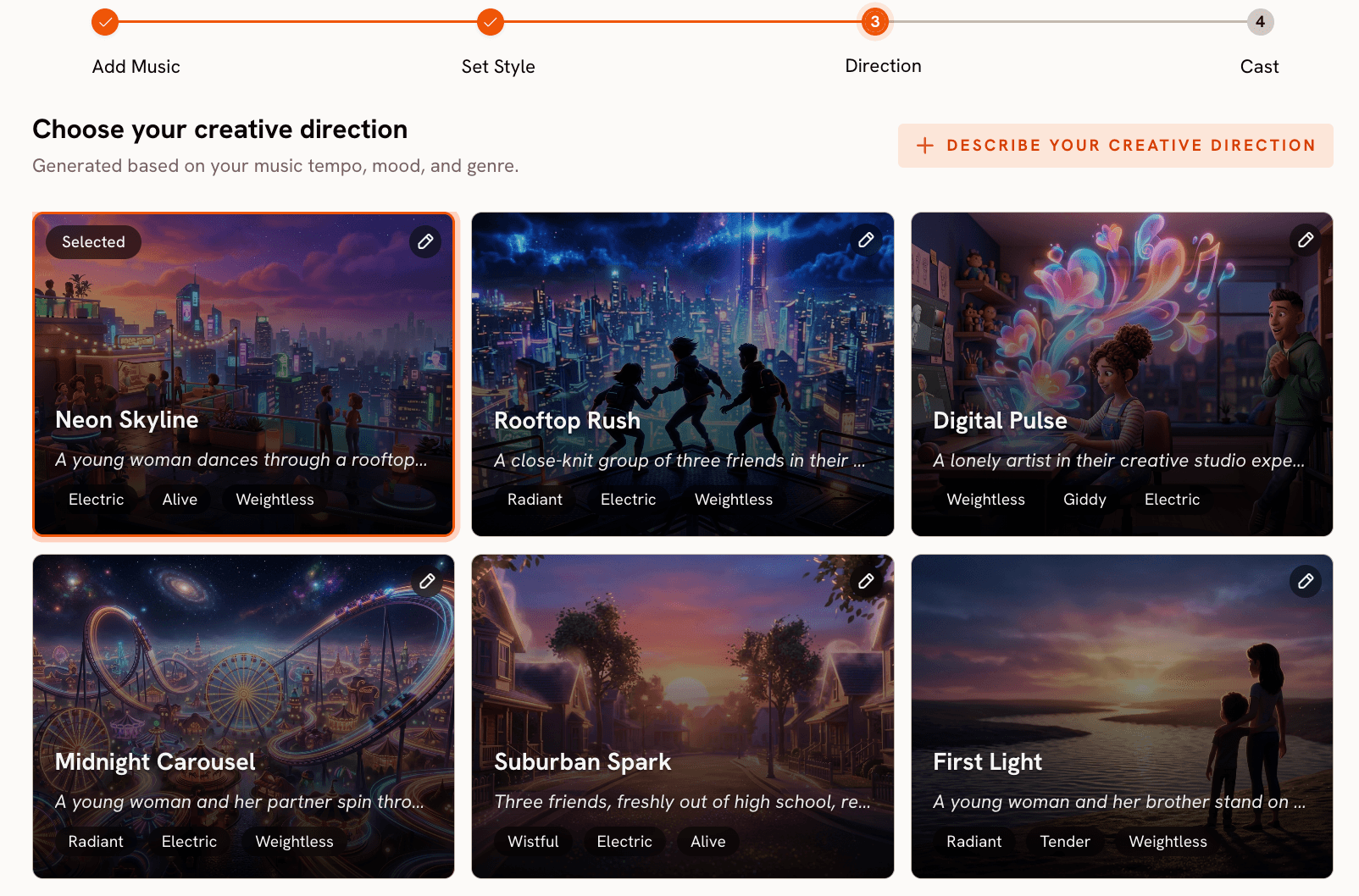

Step 3: Direction — Six Narrative Concepts Generated From Your Actual Audio

This is the step that makes Atlabs functionally different from every other tool in this category. Based on the Genre, BPM, and Mood it detected from your uploaded track, Atlabs generates six original Creative Direction concepts. Each concept has a title, a two-to-three sentence narrative description, and three emotional mood tags. These concepts are not pulled from a generic library. They are generated specifically from the audio characteristics of the track you uploaded.

A Fast Tempo, Aggressive hip-hop track produces concepts built around kinetic pursuit narratives, urban tension sequences, and triumph-from-struggle arcs. A Slow Tempo, Nostalgic folk track produces concepts centred on memory, stillness, and landscape. Pick the concept that matches the emotional direction you want for the video. If none of the six land exactly right, click "Describe your Creative Direction" to write a custom concept. The form takes a title, a narrative description, optional mood tags, and an Enhance toggle that expands and refines your concept before generating.

This is the point where Atlabs earns its position. Upload your track, look at the six concepts it generates, and compare them with what you get from typing a style prompt into a text box.

Step 4: Finalise Cast — Character Consistency Across Every Scene

The Cast step lets you name and describe the characters who appear in the video. Click to add a character, give them a name, and write a physical description. Atlabs uses these descriptions to maintain visual consistency across scenes. A character described as "a lean figure in a black hoodie, hood up, early twenties, sharp jawline, deliberate movement" will appear with those characteristics throughout the video, not as a different person in each scene. You can add multiple characters and also define objects that should appear, giving you narrative control over what the video actually contains.

Once the cast is set, click Generate. The full video, built from your audio analysis, visual style, narrative direction, and cast, renders in two to four minutes and appears in your Library.

Beyond the Music Video: Atlabs as a Full Post-Production Suite

After generating your video, Atlabs gives you tools that VidMuse does not have at all. The Motion Control tool lets you transfer movement from any reference video onto a character image. Upload a 3-to-30-second clip of a dancer or performer, upload your character image, describe the background scene, and Atlabs maps the motion onto the character. This produces a performance-style clip you can cut into your music video without needing to appear on camera yourself.

The Lip Sync tool synchronises lip movement on any character image or video clip to your audio track. Upload the image (up to 20 MB) and your audio (2 to 120 seconds), and Atlabs produces a clip where the character's lips match the vocal delivery. For artists who want a traditional performance-to-camera look without actually filming anything, this is the tool.

The Reframe tool converts your generated video to any of seven aspect ratios, including ultra-tall 9:21 and cinematic widescreen 21:9, with AI-generated fill that extends the scene. The Upscale tool takes your video to 4K at up to 60 frames per second. And Modify Video lets you AI-transform existing footage using a text prompt, turning a generated clip into a completely different visual by describing the change you want.

None of these tools exist in VidMuse. If your production needs extend beyond generating the initial video, Atlabs is the only option here with a full post-production pipeline built in.

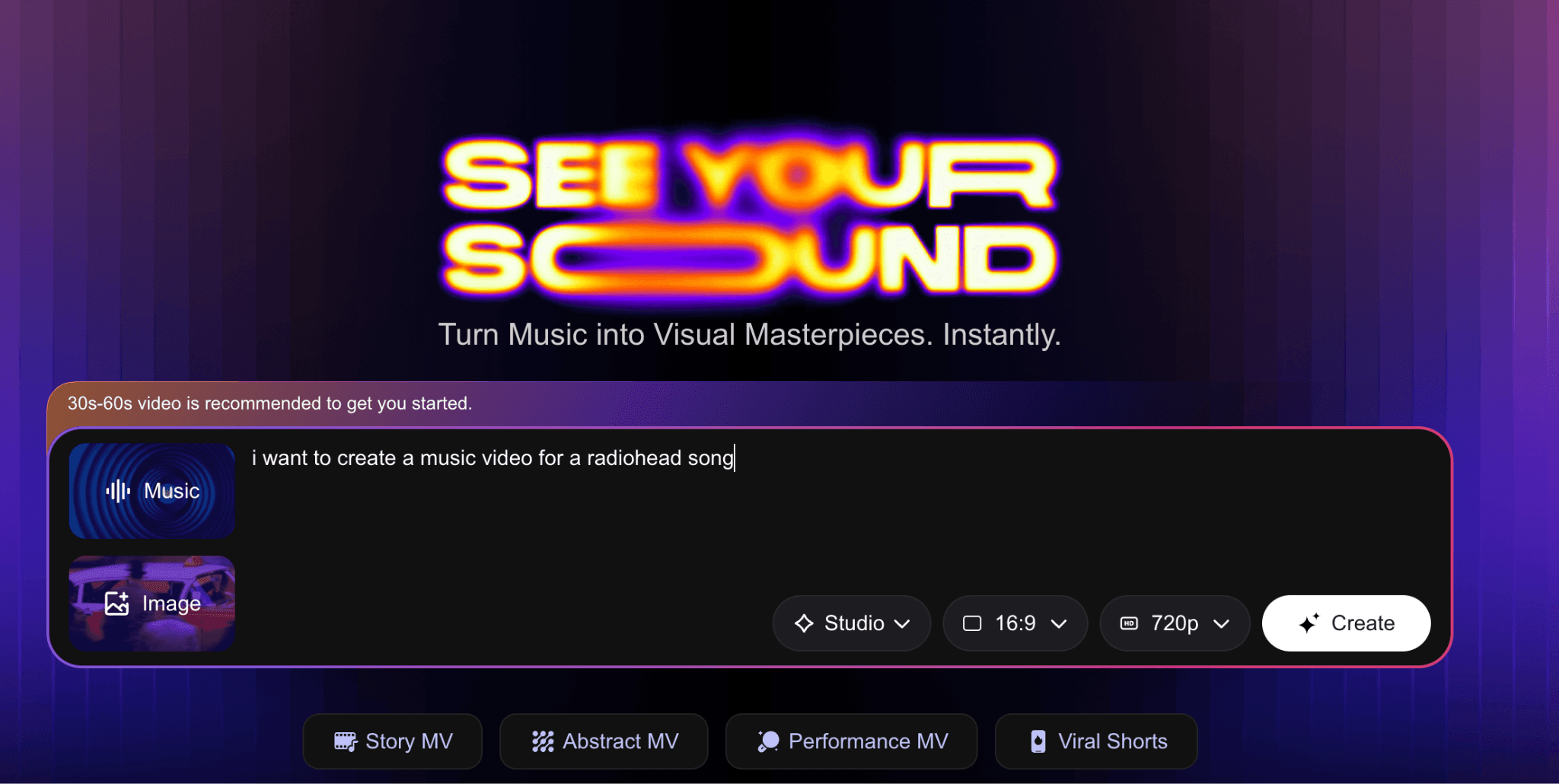

VidMuse: Fast Turnaround With Multi-Model Flexibility

VidMuse (vidmuse.ai) takes a different approach. The homepage presents a single prompt box with the placeholder "Help me create a music video for [Suno link / mp3 file] in the style of..." You paste a Suno track URL or upload an MP3, add an optional image reference, describe the style you want in natural language, and click Create. That is the entire input model. VidMuse does not analyse your audio. It reads your text description and routes the generation through whichever AI model you select.

The three quality tiers available from the Studio dropdown are: Studio (high-end visuals, described as "for official releases"), Lite (faster, cheaper, described as "for social shorts"), and Custom (Pro plan only). The aspect ratio selector offers 16:9 or 9:16, and resolution defaults to 720p with a watermark on the free plan. Upgrading to Pro unlocks 1080p and removes the watermark.

The four video type presets at the bottom of the create panel (Story MV, Abstract MV, Performance MV, Viral Shorts) give you a starting point for the type of output you want. These are not creative direction concepts derived from your music. They are style categories that shape the visual output regardless of what the track sounds like. A Story MV generated from a jazz track and a Story MV generated from a metal track will follow the same structural template. The music informs the vibe through your text description, not through any analysis VidMuse performs on the audio.

What VidMuse does well: VidMuse is significantly faster for short-form content. The 30-60 second recommended output length maps directly to Reels, TikTok, and Shorts. If you need a quick visual to post alongside a new release without spending time on creative direction, VidMuse produces a usable clip with minimal setup. It also offers access to a wide range of third-party models (Kling V3.0, Veo 3.1, Midjourney V7, Sora 2 Pro, ElevenLabs) on paid plans, which is useful if you want to experiment with specific model outputs.

Where VidMuse falls short is creative specificity. Because VidMuse does not read the music, it cannot generate concepts tailored to the tempo, mood, or genre of your track. The text prompt you write is the only creative input beyond the music file itself. Two creators uploading the same track with the same style prompt will get visually similar outputs. Two creators uploading the same track to Atlabs will each get six narrative concepts shaped by that track's musical characteristics, and each can pick one, edit it, or write their own.

The free tier also significantly limits what VidMuse can deliver. The 720p watermarked output cannot be used commercially, which means any serious release requires at least the $33-per-month Pro subscription. The 1,000 one-time free credits cover roughly one 30-second trial video.

How to Choose Between VidMuse and Atlabs

Choose VidMuse if: you need a short social clip fast, you have no interest in controlling the narrative direction of the video, and you want to experiment with outputs from specific third-party models like Midjourney V7 or Sora 2. VidMuse is a quick-turnaround tool for social-first content where the visual just needs to look good alongside the music, not tell a specific story.

Choose Atlabs if: you want the video to feel like it was made for the specific track you uploaded, not like a generic AI clip with music added. If you have a track with a specific emotional arc, a BPM that matters, and a genre identity you want the visuals to reflect, Atlabs is the tool that actually reads those things before generating anything. If you need full-length video, 1:1 format, commercial use rights, or any post-production work (Motion Control, Lip Sync, Upscale, Reframe), Atlabs is the only one of the two that supports them.

The practical question is whether the visual relationship between your music and your video matters to you. If the music is background and the visual just needs to look current, VidMuse handles that quickly. If the visual is supposed to be a direct response to the music, Atlabs is the right tool.

Custom Creative Directions to Try in Atlabs

These are ready-to-use custom Creative Direction prompts for Step 3 of the Atlabs workflow. Paste whichever matches your track into the "Describe your Creative Direction" field and turn on the Enhance toggle before generating.

Dark Trap / Cinematic Hip-Hop: A protagonist in a black hoodie moves through an industrial city at 3 AM. Camera tracks low behind them, revealing neon reflections in standing water. The environment is threatening but the character moves through it without fear. The narrative arc reaches its peak when they stop at a rooftop edge and the camera pulls back to reveal the full city below. Mood: Dark, Powerful, Cinematic. Visual style: Cyberpunk Anime.

Melodic Pop / Emotional: A figure walks through the same street across four seasons. Spring light fades to winter grey. The camera always stays at the same distance, tracking from behind. Nothing dramatic happens. The narrative is entirely in the changing light and the unchanged posture of the character. Mood: Nostalgic, Tender, Bittersweet. Visual style: Watercolor Ink.

Electronic / Euphoric: An abstract cityscape where the architecture pulses and shifts in sync with the beat. Geometric structures break apart and reassemble as the energy builds. No characters. The environment is the protagonist. Mood: Euphoric, Electric, Mysterious. Visual style: Cyberpunk Anime. Scene peaks at the drop with a full structural collapse and rebuild.

R&B / Romantic: Two characters in a warmly lit apartment. The camera moves slowly between them, holding on small gestures: a hand on a window, a glance across the room. The narrative does not resolve. The tension between them is the video. Mood: Romantic, Warm, Restrained. Visual style: Cinematic. Golden hour light throughout.

Motion Control add-on: After generating your music video in Atlabs, open the Motion Control tool. Upload a 5-15 second reference clip of a dancer performing movement that matches the energy of your track. Upload a character still from your generated video. In the prompt field, describe the background: "Fog-covered warehouse floor, single overhead light, mist at ground level, concrete walls." Atlabs transfers the dancer's movement onto your character and places them in the described environment.

Lip Sync finish: Upload a close-up still or short clip of your AI character from the generated music video. Upload the vocal track from your song (2 to 120 seconds). Atlabs synchronises the character's lip movement to the audio, producing a performance-to-camera clip that cuts naturally into a full music video edit.

Frequently Asked Questions

Can I use VidMuse with a track I made myself, not from Suno?

Yes. VidMuse accepts MP3 uploads directly alongside the Suno link option. Upload your audio file, describe the style you want in the prompt box, and generate. The output is based on your text description, not on analysis of the audio file.

Does Atlabs work with tracks from any source, or only AI-generated music?

Atlabs accepts any MP3 or WAV file up to 200 MB. The source of the audio does not matter. Tracks from DAWs, purchased instrumentals, original recordings, or AI generators all work the same way. Atlabs analyses the audio file itself, so the quality of the musical input shapes the quality of the analysis.

What does VidMuse's free plan actually produce?

The VidMuse free plan gives you 1,000 one-time credits, enough for approximately one 30-second trial video. The output is capped at 720p and includes a visible watermark. Commercial use is not permitted on the free plan. To remove the watermark, access 1080p, and use output commercially, you need the Pro plan at $33 per month billed annually.

Can I make a full-length music video in Atlabs, not just a short clip?

Yes. Atlabs is built around full-length track uploads. Unlike VidMuse, which recommends 30-60 second clips, Atlabs sets no recommended maximum length. Upload the complete track, and Atlabs generates a video that runs for the full duration. The Creative Direction step generates narrative concepts designed to carry across a complete song structure, with scene pacing derived from the detected BPM and mood.

Is there a way to try Atlabs without committing to a plan?

Yes. atlabs.ai has a free tier with credits you can use to run your first generation. Start with the Music Video workflow, upload a track you have already made, and run through all four steps. The first generation is the most useful test because it shows you what the audio analysis produces as Direction concepts for your specific track.

Final Verdict

VidMuse and Atlabs are not competing on the same ground, despite both accepting a music file as input. VidMuse is a prompt-to-video tool with audio attached. Atlabs is an audio-first creative studio where every element of the video (narrative, pacing, mood, cast) is built around the track you uploaded. That distinction matters if the relationship between your music and your video matters to you.

For independent artists releasing tracks that deserve a visual identity, not just a clip, Atlabs produces output with a coherent creative logic that matches the music. The six Direction concepts in Step 3, generated from your actual audio, are the clearest evidence of this, and VidMuse offers no equivalent. For quick social content where you need something that looks good fast, VidMuse gets you there efficiently. For anything that needs to hold up as a real music video, Atlabs is where you start.