You've seen the AI fashion images flooding your feed. Some of them look better than a real studio shoot. But when you try to recreate that quality yourself, something breaks. The model changes between shots. The garment loses its details. The lighting that worked in image one is gone by image three. This guide cuts through the noise and tells you exactly which tool to use, and why.

The Real Problem With AI Fashion Photography

You are not a photographer. You might not have a $15,000 studio budget. But you have a collection to shoot, a campaign to run, or a lookbook to produce.

So you opened Midjourney. You got one gorgeous image. Then you tried to get a second shot of the same model in the same outfit and everything fell apart.

That is the problem nobody talks about when they show you AI fashion results.

The single image is not the hard part. The hard part is:

Building a consistent model across 20 different shots

Keeping the garment details accurate when the lighting changes

Creating a full editorial spread, not just one lucky generation

Getting something actually ready to publish without three hours in Photoshop

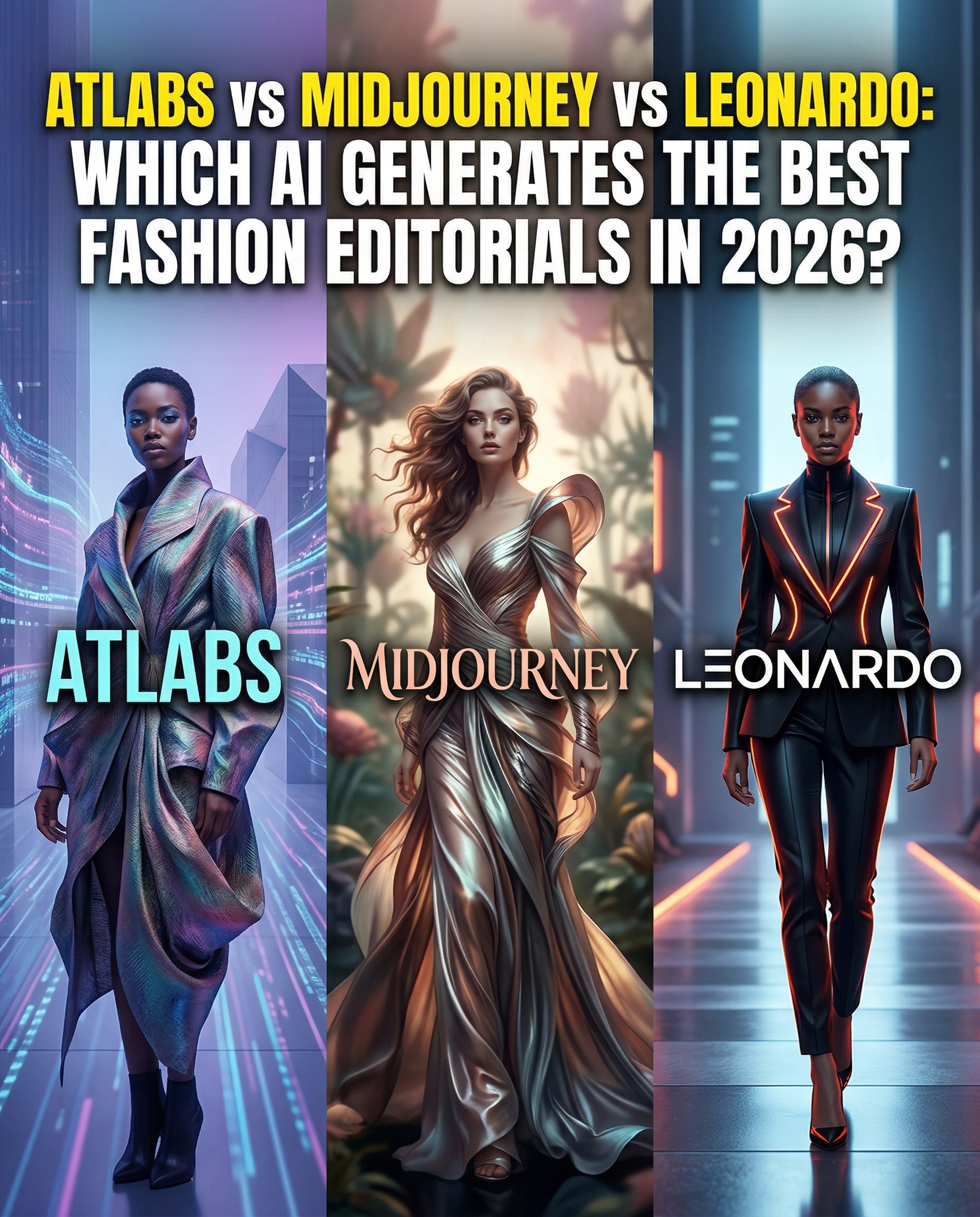

This comparison tests Atlabs AI, Midjourney v6, and Leonardo AI against the actual needs of fashion photographers, creative directors, independent designers, and social content teams producing AI fashion editorial content in 2026.

What Makes AI Fashion Editorials Actually Difficult

Fashion photography is not about one beautiful image. It is about a story told across a series of shots.

That means the tools that work for general AI image generation often fail the moment you ask them to do something fashion-specific. Three problems account for most of those failures.

The Consistency Problem

Your model has to look the same in every shot. Same face structure, same body proportions, same energy. Midjourney and Leonardo both produce beautiful single images, but getting the same model from shot to shot requires a trained LoRA in Leonardo, careful seed and reference management in Midjourney, or a lot of luck.

Atlabs solves this at the platform level. You define the character once and it stays consistent across every scene in the shoot.

The Garment Problem

Garments are the product. A blurred sleeve, a distorted hemline, or a fabricated texture that does not match the real garment destroys the usefulness of the image for any commercial purpose.

This is where prompt engineering becomes critical, and where the differences between tools become very visible.

The Editorial vs. Single Shot Problem

Most fashion designers and content teams do not need one image. They need a full shoot. Opening wide, medium full, close-up detail, alternative colourway, lifestyle context. Five to fifteen images that work together as a coherent campaign.

Only one tool on this list is actually built to handle that workflow.

Feature Comparison: Atlabs AI vs Midjourney v6 vs Leonardo AI

| Feature | Atlabs AI | Midjourney v6 | Leonardo AI |
| --- | --- | --- | --- |
| Best for | Full editorial campaigns from concept to finished asset | Single-shot hero image generation | Fine-tuned model training for brand consistency |
| Model consistency | Built-in across scenes and shoots | Manual only: seeds, character and style references | Requires manual LoRA training |
| AI video output | Yes, full motion editorial | No | No |
| Prompt control for fashion | Style, garment, lighting, mood all lockable | Strong but single-shot only | Strong with trained models |
| Ready-to-publish output | Yes, export direct to campaign | Image only, needs external editing | Image only, needs external editing |
| Workflow for non-designers | Beginner-friendly with full depth | Requires prompt fluency | Technical setup required |
| Pricing | From $15/month | From $10/month | Free tier + paid plans |

Atlabs AI: Built for the Full Editorial Shoot

If you have tried to build a fashion editorial in Midjourney and ended up with a folder full of beautiful but disconnected images, Atlabs is the answer to that problem.

Atlabs is not an image generator. It is a production platform. The difference matters enormously for fashion work.

Consistent Models Across Every Shot

You define your model once. Face structure, skin tone, body type, energy. Atlabs locks that definition and applies it across every image in the shoot.

This is not seed management. It is not hoping the random number generator cooperates. It is structural consistency built into the platform.

For a designer producing a seasonal lookbook, this means every image in the spread features the same recognisable model, creating the coherent visual identity that a real fashion shoot delivers.

Fashion-Specific Prompt Control That Actually Works

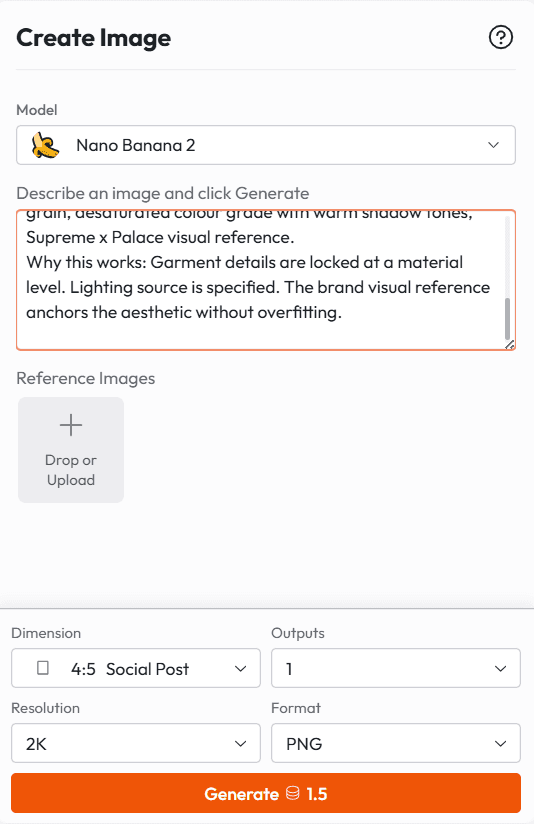

Here is an example of the kind of prompt that works inside Atlabs for fashion editorial work:

Prompt Example 1: Minimalist Luxury Editorial

Female model, late 20s, sharp angular features, cool neutral skin tone, natural brows, no visible makeup, straight dark hair pulled back cleanly. Wearing oversized structured white blazer, minimal raw hem wide-leg trousers in ivory, no accessories. Studio environment, pure white cyclorama background, hard directional light from camera left creating a single clean shadow, 85mm lens equivalent, full body framing, editorial fashion photography, high contrast, matte finish, no retouching, Raf Simons spring collection visual language.

Why this works: It locks face geometry, clothing structure, lighting direction, lens, and brand visual reference. Atlabs can hold all of this stable across multiple shots in the same session.

Prompt Example 2: Streetwear Campaign

Male model, early 20s, mixed heritage, warm medium-dark skin tone, natural textured hair, relaxed confident expression. Wearing oversized graphic tee in washed black with visible print distress, straight-leg carpenter trousers in raw denim, chunky white leather low-top sneakers. Urban environment, downtown parking structure, overcast natural light with fill bounce from concrete surfaces, wide angle lens, medium full framing, authentic documentary style, film grain, desaturated colour grade with warm shadow tones, Supreme x Palace visual reference.

Why this works: Garment details are locked at a material level. Lighting source is specified. The brand visual reference anchors the aesthetic without overfitting.

Prompt Example 3: Sustainable Fashion Lookbook

Female model, early 30s, Southern European features, warm olive skin, loose natural waves, minimal earthy makeup. Wearing linen midi dress in sage green, visible natural fabric texture, slightly relaxed silhouette, raw hem at ankle. Outdoor environment, overgrown garden setting, golden hour backlight, dappled natural shadows, 50mm lens equivalent, medium full to three-quarter framing, warm natural colour grade, organic softness, Aesop or Cos brand visual language.

Why this works: Fabric texture is explicitly described. Natural lighting conditions are specified. The brand reference anchors the mood without defaulting to generic AI softness.
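
The three prompts above describe the same things in the same order: model, garment, environment, lighting, camera, and style reference. One way to keep that order stable across a shoot is to assemble prompts from named fields. This is a minimal Python sketch under that assumption. The `FashionPrompt` class and its field names are hypothetical and are not part of any tool's API. The output is plain text you would paste into whichever generator you use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FashionPrompt:
    """Hypothetical container for the slots used in the example prompts."""
    model: str        # face, skin tone, hair, expression
    garment: str      # cut, fabric, colour, finish
    environment: str  # studio or location
    lighting: str     # source, direction, quality
    camera: str       # lens and framing
    style: str        # grade, finish, brand visual reference

    def render(self) -> str:
        # Join the slots in a fixed order so every prompt in a shoot
        # describes the same things in the same sequence.
        slots = [self.model, self.garment, self.environment,
                 self.lighting, self.camera, self.style]
        return " ".join(s.strip().rstrip(".") + "." for s in slots if s.strip())


minimalist = FashionPrompt(
    model="Female model, late 20s, sharp angular features, straight dark hair pulled back cleanly",
    garment="Wearing oversized structured white blazer, raw hem wide-leg trousers in ivory, no accessories",
    environment="Studio environment, pure white cyclorama background",
    lighting="Hard directional light from camera left creating a single clean shadow",
    camera="85mm lens equivalent, full body framing",
    style="Editorial fashion photography, high contrast, matte finish",
)
print(minimalist.render())
```

Because every slot is a required field, a prompt with no lighting or camera description fails when you build it rather than after you have generated an image.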

From Still to Motion: The Feature Nobody Else Has

Here is where Atlabs separates entirely from both Midjourney and Leonardo.

Once your editorial images are generated, you can turn the same campaign into video. Same model. Same garment. Same lighting language. Now moving.

For fashion brands running paid social, Instagram Reels, or TikTok campaigns, this is the difference between a content team that produces stills and a content team that produces a full campaign. One shoot. Multiple outputs.

No other tool in this comparison can do that.

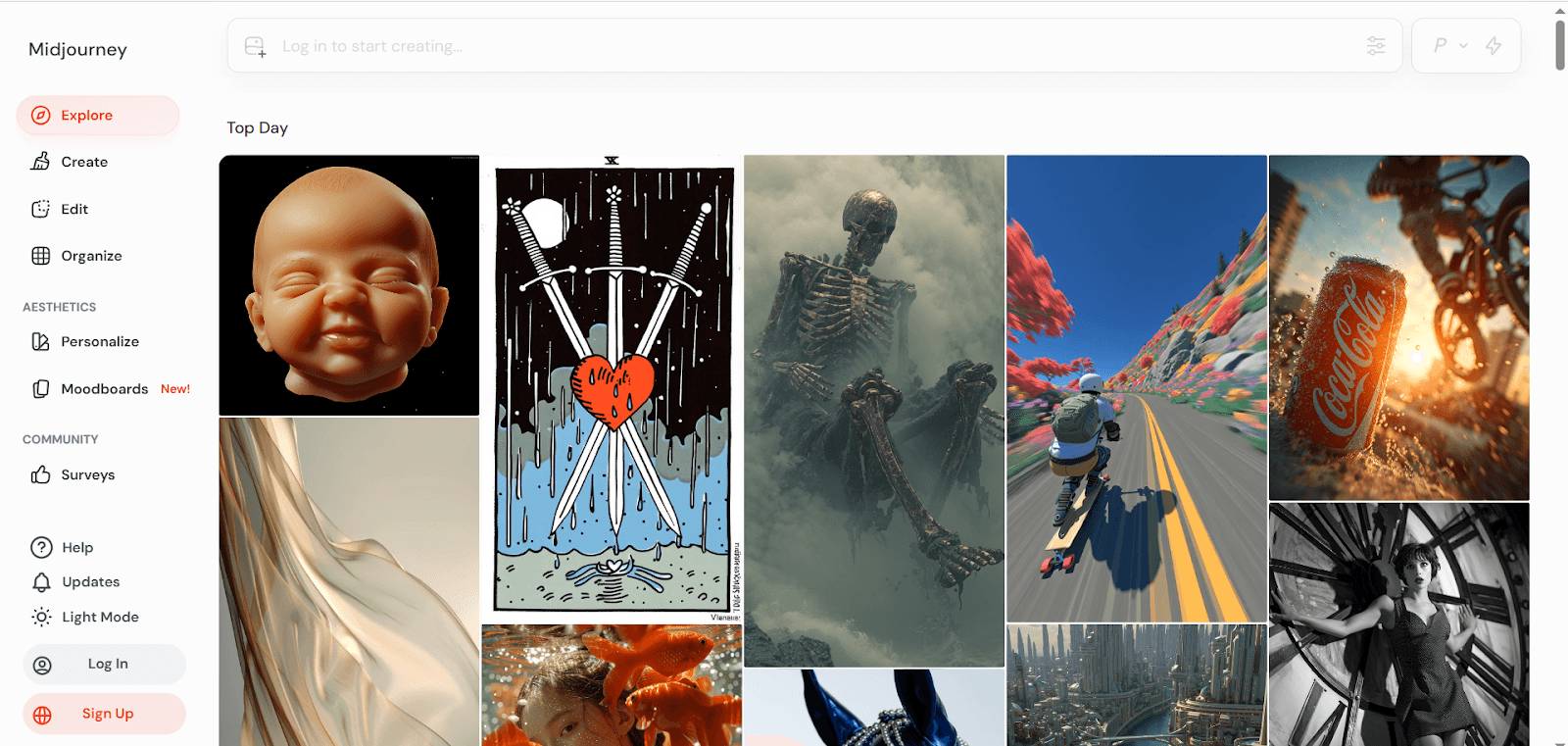

Midjourney v6: The Gold Standard for Single-Shot Fashion Images

Let's give Midjourney its due. If you need one extraordinary fashion image, Midjourney v6 is still the most aesthetically sophisticated text-to-image model available.

The way it handles fabric drape, the subtlety of its skin rendering, and its sensitivity to lighting language described in prompts are genuinely impressive. A well-crafted Midjourney prompt in the hands of someone who understands fashion photography vocabulary can produce magazine-quality results.

The limitation is structural, not quality-based.

What Midjourney Does Well

Single hero images with extraordinary visual quality

Interpreting fashion photography aesthetic references with accuracy

Fabric and material rendering in still frames

Creative direction exploration when you are still figuring out the visual language of a campaign

Where Midjourney Breaks Down for Fashion Editorials

The moment you need more than one shot, you are managing seeds, character references, and style references manually. This works if you are a technically fluent Midjourney user. It breaks if you are a designer trying to produce a full lookbook without becoming a prompt engineer.
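
To make "managing manually" concrete, here is a rough Python sketch of the bookkeeping involved. It assumes the `--seed`, `--cref` (character reference), and `--sref` (style reference) parameters that Midjourney documented for v6, so check the current parameter list before relying on them. The helper name and both URLs are hypothetical placeholders.

```python
# Hypothetical reference images you would have to host and track yourself.
CHARACTER_REF = "https://example.com/model-reference.png"
STYLE_REF = "https://example.com/style-reference.png"


def midjourney_prompt(description: str, *, seed: int,
                      character_ref: str, style_ref: str) -> str:
    """Append the same consistency parameters to a text prompt."""
    return (f"{description} --seed {seed} "
            f"--cref {character_ref} --sref {style_ref}")


framings = ["full body, white cyclorama", "close-up on blazer lapel"]
prompts = [
    midjourney_prompt(
        f"Female model in structured white blazer, {framing}",
        seed=1234,
        character_ref=CHARACTER_REF,
        style_ref=STYLE_REF,
    )
    for framing in framings
]
for prompt in prompts:
    print(prompt)
```

Even with every parameter repeated exactly, these references guide the output rather than guarantee an identical face or garment, which is why the process still takes review and regeneration.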

There is also no workflow. Midjourney gives you an image. What you do with it, how you organise it into a campaign, how you produce variations, how you get it into your content pipeline, all of that is your problem.

And there is no video.

Best for: Creative directors using it as an ideation tool, or single campaign hero shots where one image is the deliverable.

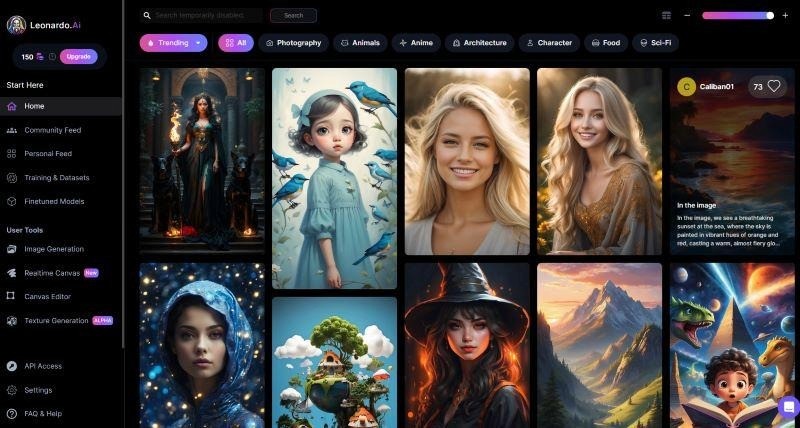

Leonardo AI: Technical Power for Brand-Trained Fashion Output

Leonardo AI takes a different approach. Instead of general-purpose generation, it gives you the tools to train your own model on specific garments, textures, or brand aesthetics.

For a fashion brand that needs extremely precise garment accuracy, such as a specific shoe silhouette, a trademarked print, or a signature textile, Leonardo's LoRA training workflow can produce results that no prompt-only tool can match.

What Leonardo Does Well

Training custom models on specific garments or brand assets

Precise product accuracy when you supply reference images

Fine-grained control for technical fashion product photography

A free tier that lets you experiment before committing

Where Leonardo Has Limits

The training workflow requires time, technical understanding, and a clean set of reference images. If you do not have those assets, or the technical willingness to manage the training process, Leonardo's advantage becomes an obstacle.

Like Midjourney, Leonardo produces images. There is no video output, no integrated campaign workflow, and no way to produce a multi-scene editorial without significant manual work in other tools.

Best for: Fashion brands with a specific product that needs precise visual accuracy across multiple images, and a team member willing to manage the training process.

Which Tool Should You Actually Use?

Stop trying to find the best AI image tool. Find the right one for the specific deliverable you are trying to produce.

| If you need this... | Use this tool |
| --- | --- |
| A full editorial shoot: model, garments, lighting, set, multiple angles | Atlabs AI |
| A single hero image for a lookbook or campaign poster | Midjourney v6 |
| Garment-specific consistency with a trained brand model | Leonardo AI |
| A campaign that moves, with video and stills from the same shoot | Atlabs AI |
| Content at volume for social, ads, and ecommerce listings | Atlabs AI |
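
If it helps to see the table as a decision rule, here is one possible reading in Python. The function name, its parameters, and the order in which the conditions are checked are assumptions made for illustration. The table itself does not rank the rows.

```python
def recommend_tool(shots: int, needs_video: bool = False,
                   has_trained_brand_model: bool = False) -> str:
    """Map a deliverable to a tool, following the table above."""
    if needs_video:
        # Only one tool in this comparison produces motion output.
        return "Atlabs AI"
    if has_trained_brand_model:
        # Garment-specific consistency from a trained brand model.
        return "Leonardo AI"
    if shots <= 1:
        # A single hero image is the whole deliverable.
        return "Midjourney v6"
    # Multi-shot editorials and content at volume.
    return "Atlabs AI"


print(recommend_tool(shots=1))
print(recommend_tool(shots=12))
print(recommend_tool(shots=6, needs_video=True))
```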

How to Run a Full AI Fashion Editorial in Atlabs: The Actual Workflow

Here is the process a fashion designer or content team would follow to produce a complete editorial campaign using Atlabs.

Define your model (5 minutes)

Write a detailed character description covering face structure, skin tone, hair, and body type. This becomes your locked character definition. Every image in the shoot will reference this.

Set your visual style (5 minutes)

Choose from Atlabs' built-in styles or define your own. Minimalist studio. Cinematic outdoor. Editorial gritty. Lock this for the campaign and every image will maintain visual coherence.

Write your shot list (10 minutes)

Map out the images you need. Opening wide shot. Three-quarter detail. Close-up fabric. Alternative colourway. Lifestyle context. Treat this like briefing a real photographer.

Generate and review (20 to 30 minutes)

Generate each shot. Because your character and style are locked, you are only adjusting framing and context per shot. Regenerate individual shots without losing the rest of the campaign.

Extend to video if needed (10 minutes)

Select the stills you want to animate. Atlabs converts them to motion clips using the same visual language. Your Instagram Reel, TikTok ad, and campaign banner all come from the same shoot.

Export and publish

Export as finished assets. Campaign-ready, not just generation outputs.

The timed steps add up to 50 to 60 minutes, so even with export and a round of regenerations, a full editorial campaign comes in under two hours. For a brand that would otherwise spend two days coordinating a studio shoot, that is a material change in how fast you can move.
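
The idea behind the workflow is that the character, garment, and style stay fixed and only the framing changes per shot. This short Python sketch imitates that with plain text prompts. It is not the Atlabs interface, where the locking happens inside the platform. The `build_shoot` function and the constant names are hypothetical.

```python
# Locked once for the whole shoot.
CHARACTER = "Female model, early 30s, warm olive skin, loose natural waves"
GARMENT = "linen midi dress in sage green, visible natural fabric texture"
STYLE = "Golden hour backlight, warm natural colour grade, 50mm lens equivalent"

# The only part that changes from shot to shot.
SHOT_LIST = [
    "Opening wide shot, overgrown garden setting",
    "Three-quarter detail",
    "Close-up on fabric texture at the hem",
    "Lifestyle context, walking along a garden path",
]


def build_shoot(character: str, garment: str, style: str,
                shot_list: list[str]) -> list[str]:
    """Return one prompt per shot with the locked parts repeated verbatim."""
    return [
        f"{character}. Wearing {garment}. {shot}. {style}."
        for shot in shot_list
    ]


for prompt in build_shoot(CHARACTER, GARMENT, STYLE, SHOT_LIST):
    print(prompt)
```

Regenerating one shot means rerunning one entry in the list. The locked text for the other shots is untouched.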

>>> Start your first Atlabs fashion editorial free

Prompt Engineering Tips for AI Fashion Photography

Regardless of which tool you use, these principles improve your results significantly.

Describe the garment like you are briefing a seamstress

Do not write 'black dress.' Write 'structured black crepe midi dress, bateau neckline, three-quarter sleeves, clean silhouette, no visible seams, matte finish fabric.' The more specific your garment description, the more accurate and usable the output.

Specify your lighting setup like a photographer

Do not write 'good lighting.' Write 'soft box key light from camera left at 45 degrees, white reflector fill from camera right, no rim light, clean shadow on white cyclorama background.' Lighting language that a photographer would use produces outputs that look like photographs.

Anchor the aesthetic with brand references

Adding a brand visual reference, such as "Bottega Veneta quiet luxury visual language" or "Stussy streetwear campaign aesthetic," anchors the model to a specific design vocabulary without requiring you to describe every element. Use this carefully: the reference should be a guide, not a constraint.

Use negative constraints explicitly

Adding 'no filters, no heavy retouching, no artificial skin smoothing, no studio backdrop patterns, no accessories not described' reduces the model's tendency to invent details you did not ask for. This is especially important for garment accuracy.
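
If you reuse the same exclusions across a shoot, it can help to keep them in one list and append them to every prompt. This is a minimal Python sketch. The `with_negatives` helper is a hypothetical name. Some tools accept exclusions through a separate negative-prompt input instead of inline text, so adapt it to the tool you use.

```python
def with_negatives(prompt: str, exclusions: list[str]) -> str:
    """Append a 'No x, no y.' sentence to the end of a prompt."""
    if not exclusions:
        return prompt
    clause = ", ".join(f"no {item}" for item in exclusions)
    # Capitalise only the first letter so names inside the list keep their case.
    clause = clause[0].upper() + clause[1:]
    return f"{prompt.rstrip('. ')}. {clause}."


SHOOT_EXCLUSIONS = [
    "filters",
    "heavy retouching",
    "artificial skin smoothing",
    "accessories not described",
]

print(with_negatives("Structured black crepe midi dress, matte finish fabric.",
                     SHOOT_EXCLUSIONS))
```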

Lock your camera language

Specify lens length, framing, and camera distance. "85mm equivalent lens, medium close-up, eye-level camera angle" produces very different results than "wide angle, low angle, full body." Fashion photography has a camera grammar. Use it.
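
A quick way to apply these tips is to check a prompt for the camera and lighting language before you generate. The following Python sketch does that with a few keyword patterns. The slot names and patterns are illustrative assumptions, and they are deliberately crude: they catch a missing lens or lighting direction but say nothing about whether the description is any good.

```python
import re

# Hypothetical patterns for the vocabulary used in the tips above.
SLOT_PATTERNS = {
    "lens": r"\d+\s*mm|wide angle",
    "framing": r"full body|medium|close-up|three-quarter",
    "lighting direction": r"camera (left|right)|backlight|key light|rim light",
}


def missing_slots(prompt: str) -> list[str]:
    """Return the slots that the prompt does not appear to specify."""
    text = prompt.lower()
    return [slot for slot, pattern in SLOT_PATTERNS.items()
            if not re.search(pattern, text)]


vague = "black dress, good lighting"
specific = ("Structured black crepe midi dress, soft box key light from "
            "camera left at 45 degrees, 85mm equivalent lens, medium close-up")

print(missing_slots(vague))
print(missing_slots(specific))
```

Note that "good lighting" fails the check on purpose, since it names no light source or direction.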

Who This Comparison Is Really For

This is not a comparison for AI hobbyists. It is for people who have a real creative or commercial problem and need to know which tool actually solves it.

Independent fashion designers

You are producing a seasonal lookbook without the budget for a studio shoot. Atlabs lets you produce a full editorial campaign with a consistent model and multiple angles for a fraction of the cost.

Social content teams

You are producing weekly content for a fashion brand's Instagram and TikTok. Atlabs gives you stills and video from the same workflow, so a single shoot produces content across every format.

Creative directors in ideation

You are pitching a campaign concept and need reference-quality images before the budget is approved. Midjourney's single-shot quality makes it the fastest way to communicate a visual direction to a client.

E-commerce fashion brands

You are listing 200 products and need product-on-model images without photographing each one. Leonardo's training workflow gives you precise garment accuracy if you have the reference images and the time to train.

The Verdict

Midjourney produces the best single image. Leonardo delivers the most precise product accuracy once trained. Atlabs produces the best editorial campaign.

If your deliverable is one image, use Midjourney.

If your deliverable is a campaign, a lookbook, a content calendar, or anything that requires more than one shot of the same model in the same world, use Atlabs.

The era of AI fashion photography that looks like a collection of lucky generations is ending. The designers and content teams winning right now are the ones treating AI like a production platform, not an image lottery.

Atlabs is that platform. Start your free trial at atlabs.ai.