If you have spent any time trying to make a music video on OpenArt, you already know where things fall apart. The image quality can be great. But the moment you try to build an actual video, you run into walls: no dedicated music workflow, no lyric-driven lip sync, inconsistent characters from one scene to the next, and no timeline to assemble the story. You end up spending more time stitching together clips in outside tools than you spent on the creative work. This is not a knock on OpenArt as an image platform. It is a solid tool. But for a music video, you need more.

This guide puts five platforms side by side: Pictory, Neural Frames, VidMuse, Atlabs.ai, and OpenArt. All five are evaluated against what music video creators actually need in 2026: audio sync, character consistency, storyboarding, model quality, output control, and pricing. Feature and pricing details come directly from each platform's own website.

What You Actually Need for a Music Video (and Where OpenArt Falls Short)

A music video is not a slideshow. It is a synchronized, narrative-driven production that needs to hold someone's attention for three to five minutes. That means you need a tool that does more than generate pretty images. Here is the baseline any serious music video tool needs to meet:

Audio-driven sync: the visuals should respond to or be timed to your actual track

Lyric or script input so the AI understands what the song is actually about

Character consistency across scenes so your artist looks the same from shot to shot

Scene-level control to fix individual shots without regenerating the whole video

A storyboard or planning layer before you spend credits on generation

Export quality suitable for YouTube, Instagram, and Spotify Canvas

OpenArt falls short on nearly every one of these for music video production. Its lip sync is limited to its own LipSync engine, with no option to use premium engines such as Kling or Omnihuman. It has no dedicated music video workflow, no lyric input, and no storyboard creator. What you get is a set of image generation tools and a basic video layer that was clearly built for general content, not audio-driven storytelling.

OpenArt is a strong image and art generation platform. For music videos specifically, you need a tool built with audio at the center, and three of the four alternatives below were designed that way.

Side-by-Side Comparison: All 5 Tools

Here is how each platform stacks up on the features that matter most for music video creation:

| Feature | Atlabs.ai | Neural Frames | VidMuse | Pictory | OpenArt |
| --- | --- | --- | --- | --- | --- |
| Dedicated music video workflow | Yes, purpose-built | Yes, music-first | Yes, AI agent | No | No |
| Audio sync / beat detection | Yes, audio-to-video | Yes, 8-stem analysis | Yes, rhythm analysis | No | Limited |
| Lip sync | Kling, Omnihuman, Runway Act-Two | Not a core feature | Kling Avatar, Omnihuman | No | Own engine only |
| Character consistency | Yes, across all scenes | Yes, custom avatars | Yes, reference images | No | Limited |
| Storyboard creator | Yes, drag-and-drop | Yes, AI storyboard | Yes, AI-planned | No | No |
| AI model access | Kling, Veo, Runway, Flux, Sora, Hailuo, more | Kling, Seedance, Runway, Stable Diffusion | Kling, Veo, Sora, Seedance, Midjourney | Stock footage only | Multiple image models |
| Video length | Up to 15 min (Max) | Up to 10 min | Full song length | Up to 20 min (text-based) | Short clips only |
| Export quality | 4K (Max/Enterprise) | 4K (Ninja+) | 1080p | 1080p | Up to 1080p |
| Free tier | Yes (20 credits/mo) | Yes (limited) | Yes (1,000 credits) | Free trial only | Yes |
| Starting paid price | $15/mo (Lite) | $26/mo (Knight, annual) | $39/mo (Pro) | $25/mo (Starter, annual) | $29/mo (Advanced) |

Full Breakdown: Each Tool Reviewed for Music Videos

1. Atlabs.ai: The Most Complete Music Video Workflow

Atlabs.ai has a dedicated music video workflow at atlabs.ai/new-music that covers the full production, from storyboard to export. The other tools on this list are either general video tools that musicians happen to use or specialist tools with a narrower feature scope.

How the workflow actually works: You start with the storyboard creator, where you plan each scene visually before generating a single second of video. Then you generate with your chosen model. Characters stay consistent across every shot. Lip sync ties your character's performance to your actual audio. When a scene does not land, you regenerate just that scene without touching the rest. You export directly to MP4 for YouTube, Instagram, TikTok, or Spotify Canvas.

What sets Atlabs apart from every other tool here:

Access to 100 plus AI models, including Google Veo 3.1, Kling 2.1 Master, Sora 2, Runway, Hailuo 2.3, Seedance, Flux Kontext, Nano Banana Pro, GPT Image, and more, all inside one platform with no separate subscriptions.

Lip sync through multiple engines: Kling Lip Sync, Sync 2.0, Omnihuman 1.5, Kling AI Avatar, Runway Act-Two, and Wan 2.2 Animate Pro. You choose the engine that fits the shot.

Audio to AI Video workflow specifically for music, where your track drives the visual generation

Expression Transfer, Voice Mirroring, and Face Expression Transfer for performance-level character work

40 plus language support with synced voiceovers, so your music video travels globally with one click

Export to Adobe Premiere Pro on Plus and higher plans for post-production polish

Custom character training so your recurring artist or band member stays recognizable across every release

3x faster renders on Max plan with priority support

Pricing (verified from atlabs.ai/pricing):

Free: 20 credits per month, watermarked export, limited models

Lite: $15/month, 1,800 credits/year, up to 2-minute videos, 20 plus visual styles, 40 plus language support

Pro: $29/month, 4,200 credits/year, AI motion videos, character casting, lip sync, 100 plus AI models including Veo 3.1 and Kling, 1080p export, up to 5-minute videos

Plus: $59/month, 9,000 credits/year, train your own models, audio to AI video, expression transfer, voice mirroring, up to 10-minute videos, Premiere Pro export

Max: $189/month, 31,200 credits/year, unlimited custom actors, 4K export, 3x faster renders, up to 15-minute videos

Enterprise: Custom pricing, unlimited 4K, custom AI models, screenplay to video, SSO

Who Atlabs is right for: Independent musicians who want to release a proper video, not just a clip. Brands using music in ads. Filmmakers building music-driven narratives. Label teams producing across multiple artists. Anyone who has already tried stitching clips together in other tools and wants the whole production in one place.

Build your music video on Atlabs: Try Atlabs Free Today

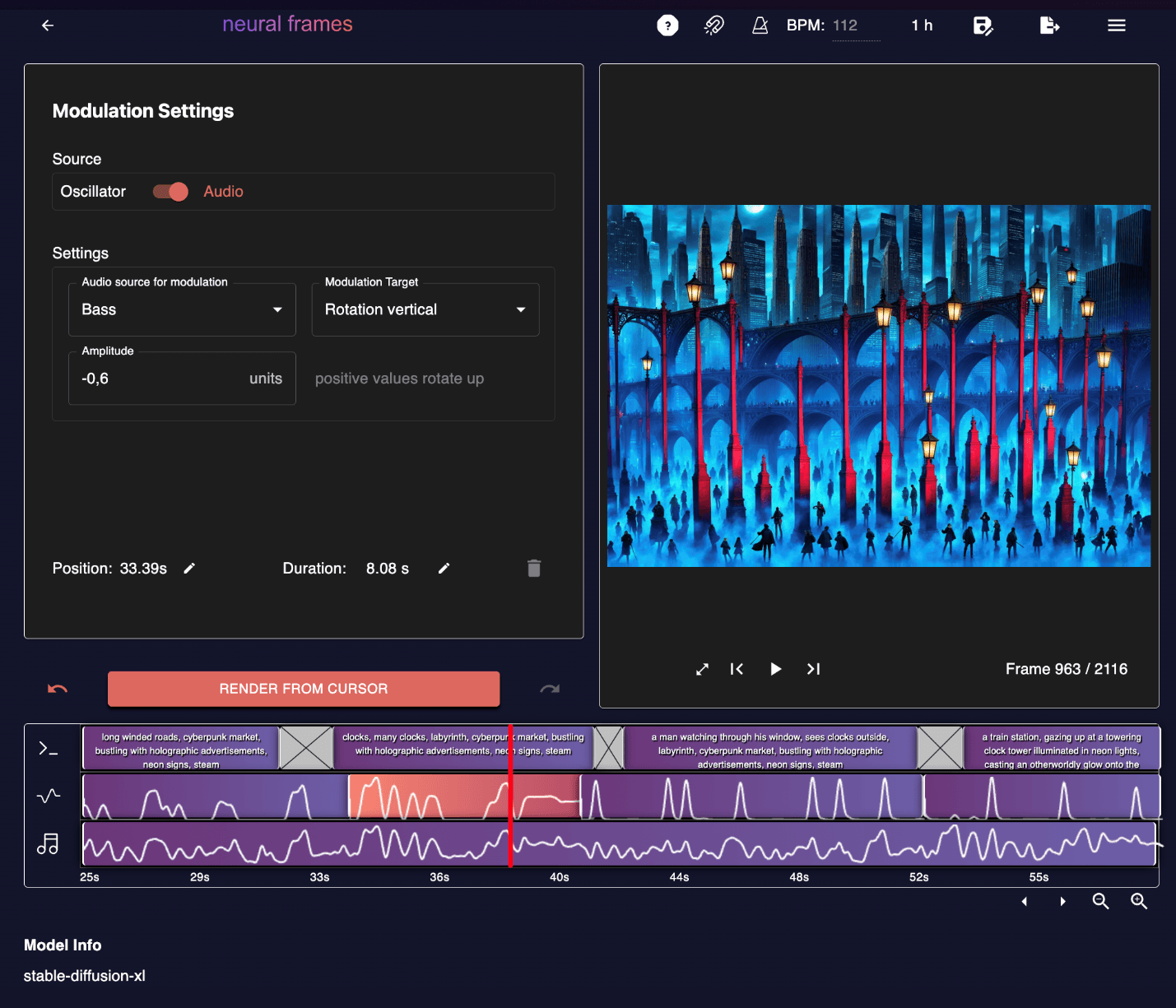

2. Neural Frames: Best for Audio-Reactive Visuals and Visualizers

Neural Frames is the closest thing to a pure music specialist on this list. The platform was built by musicians for musicians, and that shows in every feature decision. Where most tools add music as an afterthought, Neural Frames starts with your audio and builds everything from there.

The Autopilot feature is the fastest path to a music video on any tool reviewed here. You upload your track, the AI analyzes lyrics, BPM, mood, key, and emotional progression, generates a full storyboard, and renders a complete video in around ten minutes. For artists who release frequently and need visuals for every track, Autopilot is genuinely transformative.

Core features:

8-stem audio analysis: each stem (kick, snare, bass, vocals, keys, etc.) can control a separate visual parameter. Colors pulse with the bass. Transitions follow the drums. Effects respond to vocals. This is audio-reactive animation in the truest sense.

Frame-by-frame editor built like a DAW, with a timeline interface and modulation parameters for precise control

Automatic BPM detection, key recognition, mood analysis, and lyric extraction

Custom model training: upload your art style or your own face to create a signature visual identity

Access to Kling, Seedance, Runway, and Stable Diffusion inside one platform

4K upscaling on Neural Ninja and Neural Nirvana plans, no extra charge

Lyric video maker with automatic text-visual sync

Platform-optimized exports for YouTube, Instagram Reels, TikTok, and Spotify Canvas

Full commercial rights on all paid plans. Credits roll over.
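To make the idea of stem-driven visuals more concrete, here is a minimal Python sketch of the general technique. It is not Neural Frames' actual implementation, which this guide does not document. It measures how loud one stem is during each video frame and rescales that envelope to drive a single visual parameter, here brightness. It assumes stem separation has already happened and the stem is available as a list of mono float samples; the function names and the synthetic bass signal are hypothetical.

```python
import math


def frame_rms(samples, sample_rate, fps):
    """Return the RMS loudness of a mono signal for each video frame."""
    hop = sample_rate // fps  # audio samples that fall inside one video frame
    envelope = []
    for start in range(0, len(samples) - hop + 1, hop):
        window = samples[start:start + hop]
        envelope.append(math.sqrt(sum(s * s for s in window) / hop))
    return envelope


def to_parameter(envelope, low=0.0, high=1.0):
    """Rescale an envelope into [low, high] so it can drive one visual parameter."""
    peak = max(envelope, default=0.0) or 1.0  # avoid dividing by zero on silence
    return [low + (high - low) * (value / peak) for value in envelope]


# Hypothetical bass stem: a 60 Hz tone that fades in over one second.
sample_rate, fps = 8000, 25
bass_stem = [
    (n / sample_rate) * math.sin(2 * math.pi * 60 * n / sample_rate)
    for n in range(sample_rate)
]

# One brightness value per video frame: louder bass, brighter frame.
brightness = to_parameter(frame_rms(bass_stem, sample_rate, fps))
print(len(brightness), round(brightness[-1], 2))
```

In a fuller pipeline you would likely repeat this for each stem, map each envelope to its own parameter (kick to transitions, vocals to effects), and smooth the values so the visuals do not flicker.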

Where Neural Frames is strong: Abstract, artistic, audio-reactive visuals. Trippy and experimental aesthetics. Visualizers and Spotify Canvas content. Artists who want to develop a signature visual style and maintain it across a whole album or discography. The frame-by-frame control gives experienced creators a level of precision that no other tool here matches.

Where it falls short for music videos: Neural Frames is exceptional at audio-reactive animation but does not have the same lip sync engine quality as Atlabs or VidMuse for character performance videos. If your music video concept involves a character who looks and moves like a real performer, Neural Frames is better suited for stylized or abstract visuals than realistic performance footage. There is no built-in voiceover, background music library, or 40-language translation.

Pricing (verified from neuralframes.com/pricing, annual billing):

Neural Knight: $26/month, 2,400 credits, 7 AI models, stem extraction, audioreactive effects, 1080p upscaling. 4K not included. Not recommended for Autopilot.

Neural Ninja: $66/month, 7,200 credits, 10 AI models, stem extraction, audioreactive effects, 1080p and 4K upscaling. Recommended for Autopilot.

Neural Nirvana: $199/month, 24,000 credits, 10 AI models, priority upscaling, best for Autopilot.

Free tier available with limited generation.

30-day money-back guarantee if fewer than 30 credits used.

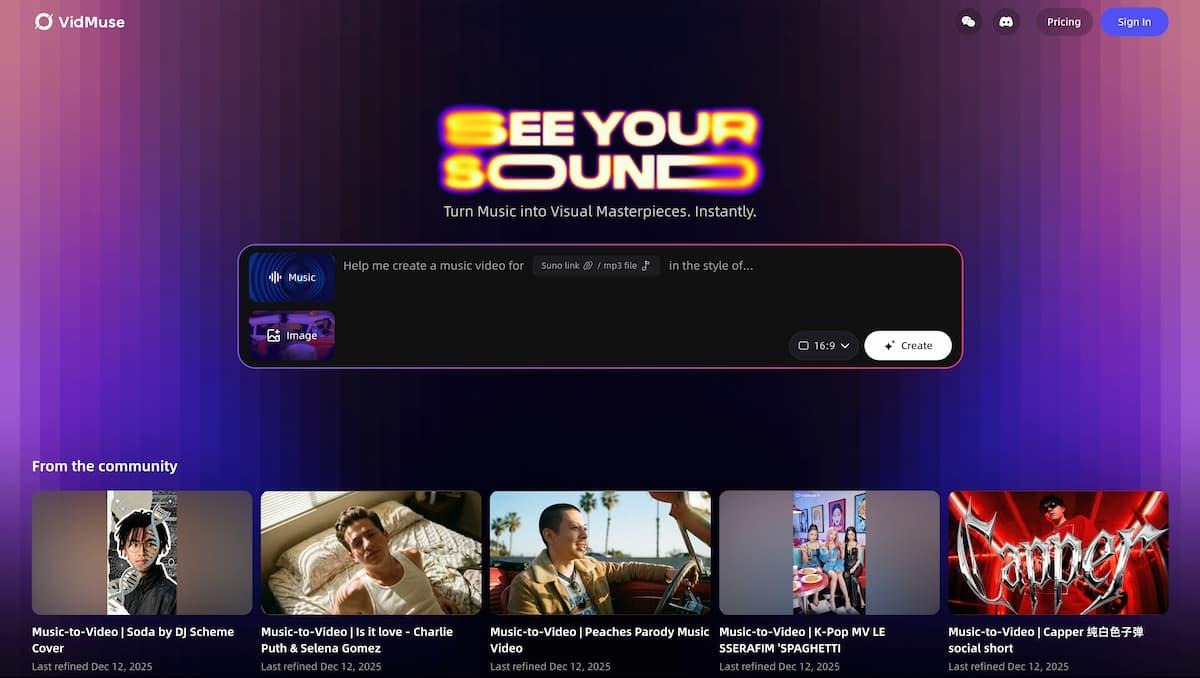

3. VidMuse: Best Conversational Music Video Agent

VidMuse is the newest platform in this guide, having launched in early 2026. It takes a different approach to music video creation: instead of a traditional interface with buttons and settings, VidMuse operates as a conversational AI agent. You describe your vision in plain language, the agent asks clarifying questions, plans the storyboard, and generates your video as a collaborative process.

How VidMuse works: You upload your track and describe what you want. VidMuse analyzes lyrics, musical segments, rhythm, and emotional progression, then suggests a visual style and storyboard. You refine it through the chat interface. The AI adapts based on your feedback throughout the process. This conversational approach lowers the barrier for creators who find prompt engineering intimidating.

Core features:

Intelligent music analysis: parses lyrics, identifies rhythm phases, reads emotional fluctuations, plans timing and scene transitions around your specific track

Conversational workflow: you guide the production in plain language, no prompting experience needed

Visual consistency engine: upload reference images of your lead actor, costume, or character. VidMuse applies them consistently across the entire project.

Studio Mode and Lite Mode: toggle between high-quality flagship renders and credit-efficient draft mode during iteration

Models include Kling V3.0, Veo 3.1, Sora 2 Pro, Seedance V1.5 Pro, Midjourney V7, Nano Banana Pro, Omnihuman V1.5, ElevenLabs Music, and more on the Pro plan

Export of Project Clip files for import into Adobe Premiere Pro or DaVinci Resolve

Player Mode: review shot timing and rhythm sync before committing credits to full generation

Commercial license on all paid plans

Where VidMuse shines: For artists who know what they want creatively but find AI video tools technically intimidating, the conversational workflow removes friction. The music analysis is genuinely intelligent. Player Mode is a smart credit-saving feature that lets you preview before you render. The model roster on the Pro plan is extensive.

Where it falls short: VidMuse is a newer platform and some quality-of-life gaps show. Customer support has drawn complaints in early reviews, with some users reporting slow response times. The credit system can be hard to predict upfront since different models consume at different rates. There is no built-in storyboard planning interface in the traditional sense because everything runs through chat. If you prefer clicking through a visual builder rather than typing instructions, the workflow may feel indirect.

Pricing (verified from vidmuse.ai/pricing):

Free: 1,000 one-time credits, 720p with watermark, limited model trial, personal use only

Pro: $39/month (or $33/month billed annually), 4,000 credits/month, 1080p, all model access including Kling V3.0, Veo 3.1, Sora 2, Omnihuman, commercial license

Studio: $159/month (or $133/month annually), 18,000 credits/month, all Pro features, 1-on-1 support, commercial license. Covers 10 to 20 polished 1-minute video projects per month.

Add-on credit packs: $30 for 2,400 credits, $60 for 4,800, $100 for 8,000, $200 for 16,000. Valid 12 months from purchase.

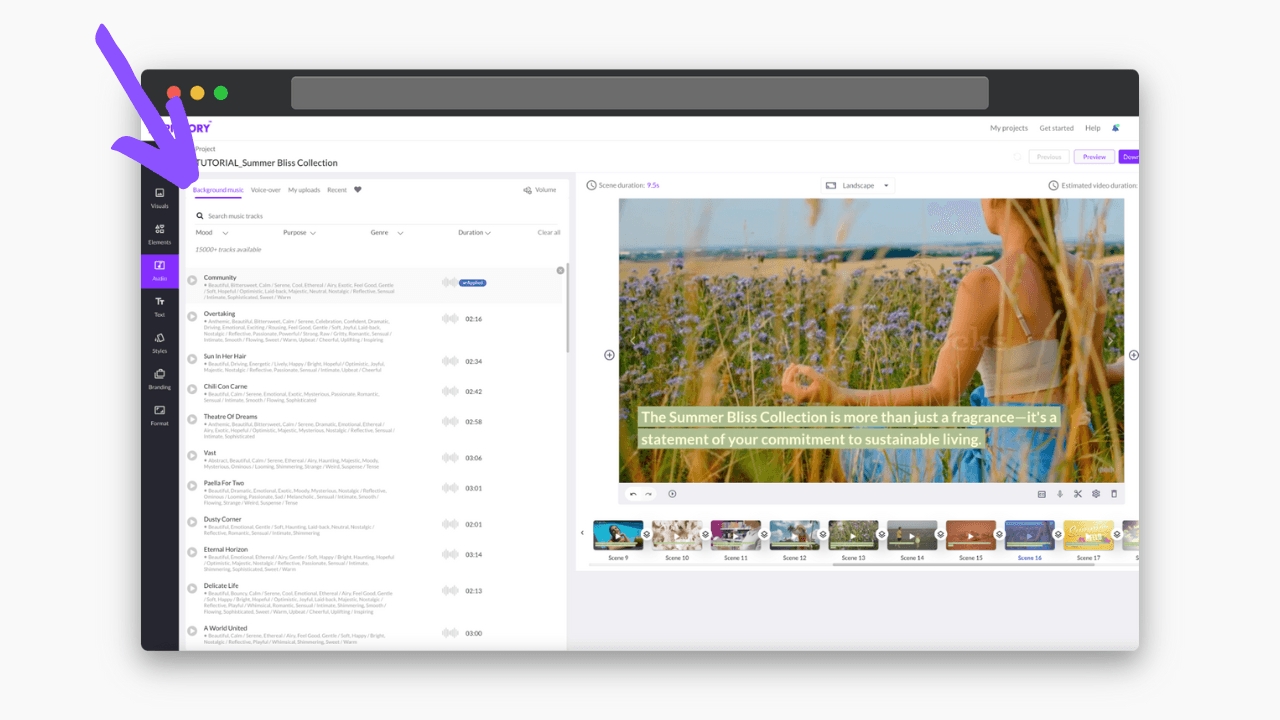

4. Pictory: Best for Script-to-Video with Stock Media

Pictory is the most widely used content repurposing tool in this comparison. It is fast, beginner-friendly, and has one of the largest stock media libraries available through any AI video tool. If your priority is turning a script or existing written content into a video quickly, Pictory does that better than most.

How Pictory works for music video creators: Pictory does not directly sync video to audio. What it does is let you paste a script, automatically match scenes to stock footage, add an AI voiceover, and produce a polished video. If your music video concept is lyric-driven and you want professional stock footage as the visual backdrop rather than AI-generated imagery, Pictory can work. For AI-generated visuals, performance footage, or character-driven stories, it is not the right tool.

Core features:

Script to Video: paste your lyrics or script, AI matches scenes to stock footage from a library of 18 million plus assets from Getty Images and Storyblocks on Professional plans

Article to Video: converts existing written content into a video, useful for promotional content around a release

Text-based video editing: edit the video by editing the transcript. Delete a word, the corresponding clip disappears.

AI voiceovers from ElevenLabs on Professional plans, 51 hyper-realistic voices

Auto captions on all videos

Brand kit: custom colors, fonts, logo

Built-in stock music library that scales by plan (5,000 tracks on Starter, 10,000 on Professional)

20 plus language support for AI voiceovers

URL to Video: feed a blog post URL, Pictory pulls the content and creates a video

Where Pictory works for music: Promotional content around a release. Turning a lyric sheet into a lyric video with stock footage backgrounds. Creating recap or teaser content from existing interview or podcast audio. Fast turnaround when you need something presentable without custom character work.

Where Pictory is the wrong choice: Pictory does not generate AI visuals. It uses stock footage. If you want original AI-generated imagery that matches your song's world, Pictory cannot deliver that. There is no audio-reactive sync, no character consistency engine, no beat detection, and no music video-specific workflow. It is a content marketing tool that musicians can use for some purposes, not a dedicated music video platform.

Pricing (verified, annual billing):

Starter: $25/month annually ($23/month on flexible pricing seen across sources), 200 video minutes/month, 2 million stock assets, 34 AI voices, 5,000 music tracks, 720p

Professional: $35/month annually, 600 video minutes/month, 18 million assets from Getty and Storyblocks, 51 hyper-realistic ElevenLabs voices, 10,000 music tracks, 1080p, video summarization

Teams: $99/month annually, 3 users, 90 videos/month, collaboration features, bulk downloads

Free trial: 3 video projects up to 10 minutes each. No permanent free tier.

Direct Comparison: Atlabs vs OpenArt for Music Videos

Most people reading this already have a reason they are moving on from OpenArt. Here is the concrete feature-by-feature comparison so you can see exactly what changes:

| Feature | OpenArt | Atlabs.ai |
| --- | --- | --- |
| Dedicated music video workflow | No | Yes, at atlabs.ai/new-music |
| Lyric-driven visual generation | Not supported | Audio to AI Video workflow |
| Storyboard creator | Not available | Yes, drag-and-drop scene planner |
| Character consistency | Limited, varies by model | Yes, consistent across all scenes |
| Lip sync engines | Own LipSync engine only | Kling, Omnihuman, Sync 2.0, Runway Act-Two, and more |
| AI model access | Multiple image/video models | 100 plus models: Veo 3.1, Kling 2.1 Master, Sora 2, Runway, Flux, Hailuo, Seedance, and more |
| Scene-level editing | Limited | Yes, regenerate individual scenes |
| Export to Premiere Pro | No | Yes (Plus plan and above) |
| Free tier | Yes, credit limits | Yes, 20 credits per month |
| Starting paid price | $29/month (Advanced) | $15/month (Lite) |
| 40 plus language voiceovers | No | Yes |
| Train custom character models | Character training available | Yes, on Plus plan and above |

Which Tool Is Right for You?

The right tool depends entirely on what kind of music video you are making and how much of the workflow you want handled for you.

Choose Atlabs.ai if: You want a complete, production-ready music video with consistent characters, proper lip sync, and multi-model AI generation. You want the storyboard planned, the audio synced, and the export formatted for your platform of choice. You want commercial rights and do not want to manage multiple tool subscriptions. Atlabs is the best all-in-one platform for musicians and content creators in 2026 who want results they are actually proud to release.

Choose Neural Frames if: Your music video concept is visual and artistic rather than character-driven. You want audio-reactive animations, trippy visualizers, or a signature aesthetic that responds to the sound of your track. The frame-by-frame DAW-style editor is unmatched for precision, and the Autopilot feature is the fastest path to a draft on any tool in this guide. This is the platform for musicians who are also visual artists.

Choose VidMuse if: You want to direct your music video through conversation rather than through a visual interface. The AI agent approach works well for creators who find prompt engineering intimidating and prefer to describe what they want in plain language. The model roster is strong and the music analysis is intelligent. Worth watching as the platform matures.

Choose Pictory if: You need fast promotional content around a release, a lyric video with stock footage, or a way to repurpose written content like interviews or press releases into video format. Not suitable for original AI-generated music video visuals or character-driven productions.

Stick with OpenArt if: Your primary need is image generation or short static clips and you do not need a full music video workflow. For everything a proper music video requires, OpenArt is not built for it.

The Verdict: Why Atlabs Is the Right Move for Music Video Creators

Every tool in this guide has something going for it. Neural Frames is genuinely excellent for audio-reactive visuals. VidMuse has a clever conversational approach. Pictory is fast and beginner-friendly for stock-based content. None of them do what Atlabs does: bring the full music video production pipeline into one place, built around your audio from the first scene to the final export.

The storyboard-first approach means you do not spend credits figuring out what you want. The multi-model engine means you always have access to the best generation quality available. The consistent character system means your artist looks the same in scene 1 and scene 14. The lip sync engines mean your character's performance is tied to your actual track, not a generic animation.

And the pricing is honest. You can start free. If you want the full production toolkit, the Pro plan at $29/month gives you 100 plus AI models, lip sync, character casting, and 1080p export. For the depth of what is included, that is one of the strongest value propositions in AI video right now. See for yourself.

Start making your music video on Atlabs: Try Atlabs Free Today