Most AI Video Ads Look Good. Almost None of Them Perform.

The barrier to producing a visually polished AI video ad is essentially zero. Any brand with access to modern AI tools can generate something that looks intentional, on-brand, and scroll-worthy. That is no longer the differentiator.

What separates a 3% CTR from a 0.4% CTR is not production quality. It is structure. The ads that consistently perform on Facebook, TikTok, and Instagram Reels follow a repeatable anatomy. They are engineered, not generated.

This post breaks down that anatomy and shows exactly how Atlabs is built to execute it.

The Shift to AI Video Ads

Traditional ad production operates on a fixed cost model. A single video shoot requires casting, location fees, production crew, post-production, and revision cycles that take weeks. For most brands, this means running three to five creatives per quarter.

AI-native workflows break that constraint entirely.

Speed is the first lever. What previously required a two-week production cycle can be output in hours. Motion, character consistency, scene variation, and voiceover are all controllable within a single platform session.

Scalability is the second. A brand running AI video workflows can test 30 creative variants in the time it would have taken to produce one. That volume unlocks real performance data instead of directional assumptions.

Cost compression is the third. AI video production does not eliminate the need for creative strategy, but it removes the hard floor on execution costs. Iteration is no longer expensive.

Atlabs is built specifically for this model: a production system where speed, scene modularity, and output consistency are native to the workflow, not bolted on afterward.

The Atlabs Workflow: Step by Step

Atlabs is an AI creative suite, not just a clip generation tool. Every step below is a native workflow stage, with no third-party tools and no manual reformatting.

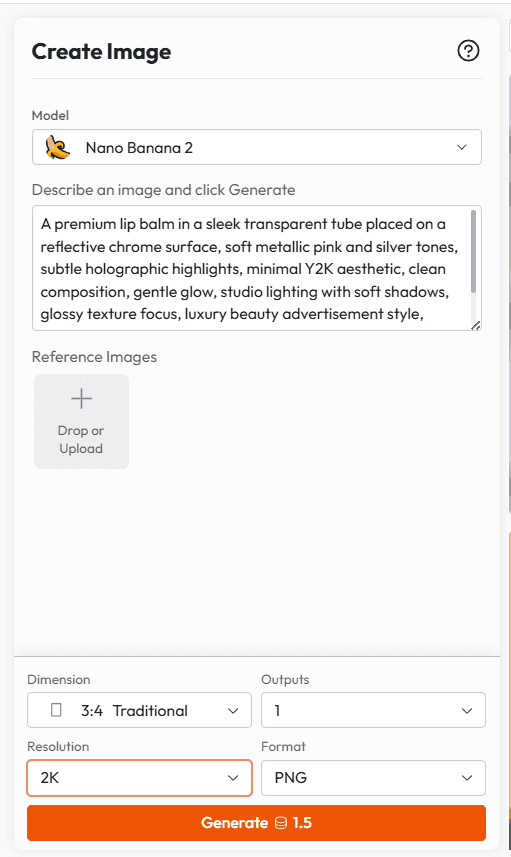

STEP 1 | Lock the Visual Foundation

Generate a static image to validate your visual direction before committing to motion. Define subject, lighting, environment, color temperature, and composition at this stage. Iteration here is fast and cheap.

Atlabs feature: Atlabs stills generation supports full prompt parameterization (lighting type, environment detail, subject position, color temperature) with instant preview.

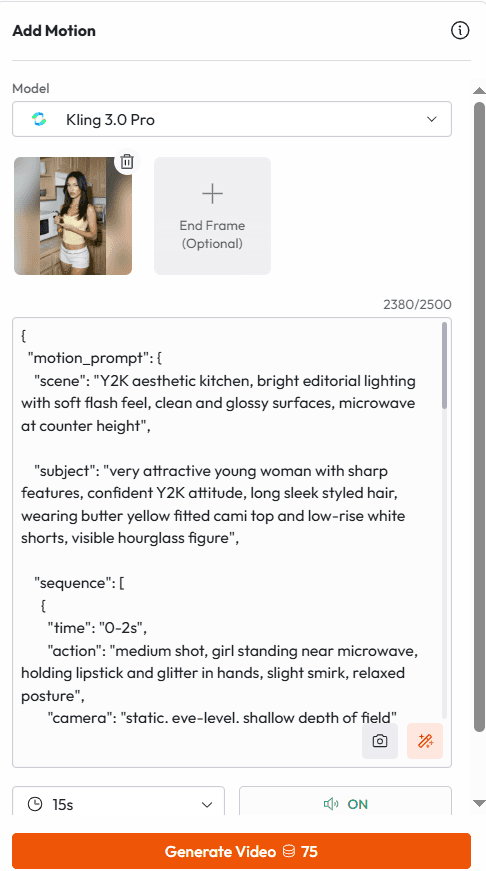

STEP 2 | Motion Prompt: Define the Kinetic Layer

Apply a motion prompt that specifies camera movement, subject motion, and timing with precision. Reference specific durations ('2-second slow push-in') rather than directional descriptions ('zoom in'). Precision here controls output quality directly.

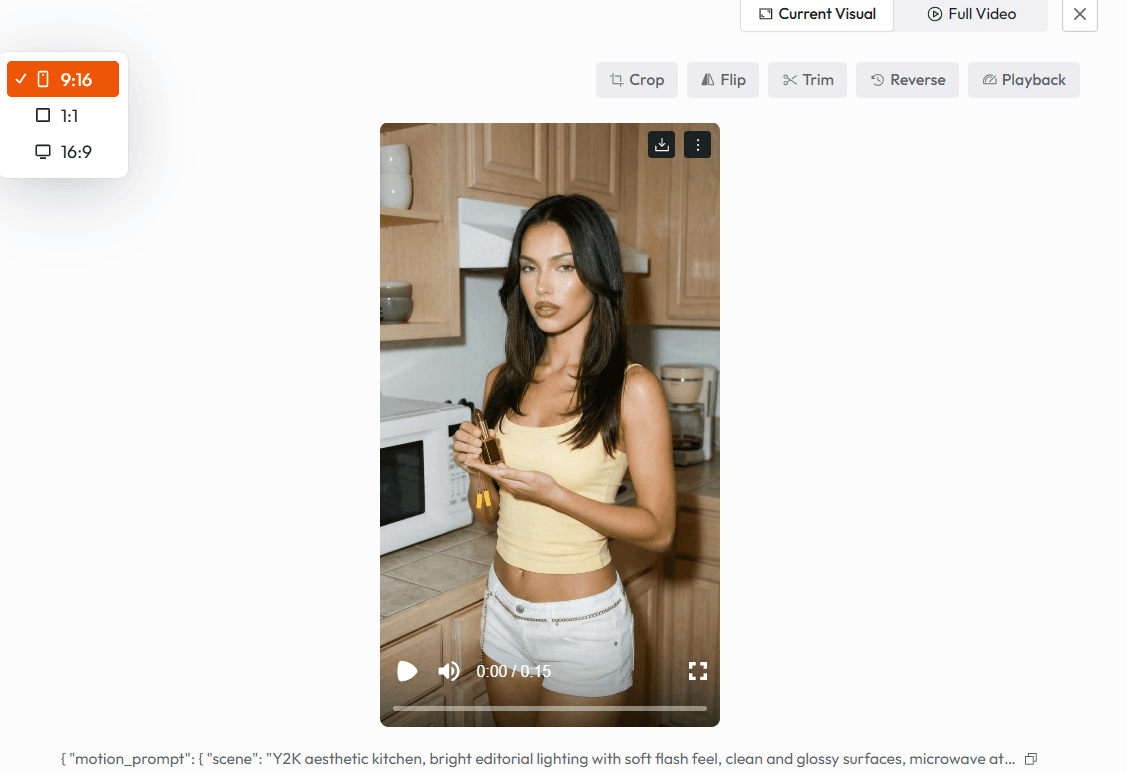

STEP 3 | Output Formats and Optimization

Apply platform-specific specifications: aspect ratio, file size, caption placement, audio normalization. No third-party compression or reformatting required.

See It in Action

Watch the full Atlabs workflow below: a real-time walkthrough from brief to final export, applied to a live ad concept.

What Top-Performing Ads Have in Common

01 | The Hook: First Frame as a Creative Statement

On TikTok, the average decision window is 1.2 seconds. On Facebook and Instagram it is slightly longer, but the principle is identical: if the first frame doesn't create an involuntary pause, the ad is invisible. Top-performing hooks use high contrast, a clear subject, and motion that implies continuation. The viewer should feel that continuing to scroll costs them something.

02 | Pacing: Modulated, Not Flat

Pacing is where most AI-generated ads fail. Default video model output is visually smooth but narratively flat. High-performing ads modulate pacing deliberately: fast cuts during the problem statement, slower motion during the product reveal, a brief hold on the CTA. This is not automatic; it requires intentional scene-by-scene construction.

03 | Realism and Texture: UGC-Native Visual Language

Hyper-polished visuals underperform across these platforms. The algorithm rewards content that reads as native to the feed: slight imperfections, natural lighting, handheld motion artifacts, environments that look inhabited rather than staged. The UGC aesthetic is a trust signal, not a trend. AI video that replicates this texture converts at higher rates than content that looks like a brand asset.

04 | Emotional Pull: Consistent Register Across All Scenes

Performance data consistently shows that ads anchored in a specific emotional state, not generic positivity, outperform neutral creative. The emotional register must be established in the first three seconds and sustained through the CTA. Tonal inconsistency is the single most common structural failure in AI-generated ad content.

05 | Clarity of Outcome: One Unambiguous CTA

The viewer needs to understand, without effort, what happens after they click. Ambiguity in the CTA frame, whether visual, textual, or both, directly suppresses conversion. Top-performing ads close with a single outcome statement. Not a product feature. Not a tagline. What the viewer gets.

The Atlabs Ad Anatomy at a Glance

| Trait | Why It Matters | Atlabs Capability |
|---|---|---|
| Hook | Stops the scroll in 1.2s | Multi-variant hook generation from single brief |
| Pacing | Controls narrative rhythm | Scene-level motion & timing parameters |
| Realism | Builds feed-native trust | Lighting, texture & imperfection controls |
| Emotional Pull | Sustains viewer engagement | Cross-scene tonal consistency engine |
| CTA Clarity | Drives post-click action | Platform-formatted CTA scene with safe zones |

Why Brands Are Shifting to This

Performance data is the primary driver. Brands that have moved significant ad spend into AI-native creative workflows are seeing improvements in CTR and ROAS, not because the visuals are better, but because they are running more tests and finding winning creatives faster.

Speed compounds. A team that ships 20 creative variants per week instead of 4 does not just find winners faster. It builds structural knowledge of what resonates with its audience.
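To make the "more tests, faster winners" idea concrete, here is a minimal sketch of ranking creative variants by click-through rate. Everything in it is an illustrative assumption: the `VariantResult` type, the variant names, the `min_impressions` threshold, and the numbers are made up, and none of it reflects an Atlabs or ad-platform API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VariantResult:
    name: str          # hypothetical creative label
    impressions: int
    clicks: int

    @property
    def ctr(self) -> float:
        """Click-through rate as a fraction (clicks / impressions)."""
        return self.clicks / self.impressions if self.impressions else 0.0


def rank_by_ctr(results, min_impressions=1000):
    """Return variants with enough impressions, best CTR first."""
    eligible = [r for r in results if r.impressions >= min_impressions]
    return sorted(eligible, key=lambda r: r.ctr, reverse=True)


# Made-up numbers for illustration only.
results = [
    VariantResult("hook_a", impressions=10_000, clicks=40),
    VariantResult("hook_b", impressions=10_000, clicks=300),
    VariantResult("hook_c", impressions=200, clicks=20),  # too little data to trust
]

ranked = rank_by_ctr(results)
for r in ranked:
    print(f"{r.name}: {r.ctr:.1%}")
```

The impression threshold is the design choice worth noting: a variant with a handful of impressions can show an inflated CTR, so it is excluded from the ranking until it has enough data.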

UGC-style realism is increasingly difficult to source at scale through traditional means. AI workflows that replicate the texture and pacing of organic content give brands access to a creative register that previously required large influencer networks.

How to Transition to AI Video Ad Workflows

Transitioning from traditional to AI-native ad production is not a tool swap. It is a workflow redesign. The following steps provide a practical path.

1. Audit your existing top performers

Identify the structural patterns in your best-performing creatives. Hook format, pacing pattern, emotional register. These become your Atlabs brief foundation.

2. Define a modular scene structure

Stop thinking in terms of single videos. Think in scenes: Hook (0-3s), Problem (3-8s), Solution (8-18s), CTA (18-25s). In Atlabs, each scene is a discrete generation unit, so you can swap hooks without rebuilding everything.
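One way to picture the modular structure is as a list of timed scenes where only the hook changes between variants. The sketch below is a plain-Python illustration, not Atlabs' data model: the `Scene` type, the `swap_hook` helper, and the placeholder prompt strings are all hypothetical. Only the scene roles and timings come from the breakdown above.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Scene:
    role: str      # "hook", "problem", "solution", or "cta"
    start_s: int   # scene start, in seconds
    end_s: int     # scene end, in seconds
    prompt: str    # placeholder brief text for this scene


def is_contiguous(scenes):
    """True if each scene starts exactly where the previous one ends."""
    return all(a.end_s == b.start_s for a, b in zip(scenes, scenes[1:]))


def swap_hook(scenes, new_prompt):
    """Return a new scene list with only the hook's prompt replaced."""
    return [replace(s, prompt=new_prompt) if s.role == "hook" else s
            for s in scenes]


# Timings follow the Hook / Problem / Solution / CTA split described above.
base = [
    Scene("hook", 0, 3, "hook variant A"),
    Scene("problem", 3, 8, "problem statement"),
    Scene("solution", 8, 18, "product reveal"),
    Scene("cta", 18, 25, "single outcome statement"),
]

variant = swap_hook(base, "hook variant B")
```

Because the scenes are immutable and `swap_hook` returns a new list, the base ad stays intact while each hook variant reuses the same problem, solution, and CTA scenes.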

3. Generate stills before motion

Always begin with Atlabs still generation to validate your visual direction. Lock the frame composition, lighting, and subject placement before committing to motion. This single step eliminates the largest source of iteration waste.

4. Iterate on hooks first

Generate five to eight hook variants for a single concept. Run hook tests with minimal spend before producing the full ad. Atlabs makes this economical; each variant takes minutes, not days.

5. Set platform output specs upfront

TikTok and Facebook have different aspect ratios, caption placements, and feed behaviors. In Atlabs, you set platform specs at the project level and outputs are formatted correctly from the start.
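As a rough illustration of project-level specs, the sketch below maps each platform to an aspect ratio and derives the export size from it. The `PlatformSpec` type, the `SPECS` table, and `export_size` are hypothetical names, not Atlabs settings. The ratios used (9:16 for TikTok, 4:5 for Facebook feed) are commonly recommended values assumed here for the example, so check each platform's current ad specifications before relying on them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlatformSpec:
    aspect_w: int   # aspect ratio width component
    aspect_h: int   # aspect ratio height component
    width_px: int   # export width in pixels

    @property
    def height_px(self) -> int:
        """Export height derived from the width and the aspect ratio."""
        return self.width_px * self.aspect_h // self.aspect_w


# Assumed values for illustration; verify against current platform docs.
SPECS = {
    "tiktok": PlatformSpec(aspect_w=9, aspect_h=16, width_px=1080),
    "facebook_feed": PlatformSpec(aspect_w=4, aspect_h=5, width_px=1080),
}


def export_size(platform: str) -> tuple[int, int]:
    """Return (width, height) in pixels for a configured platform."""
    spec = SPECS[platform]
    return spec.width_px, spec.height_px


for name in SPECS:
    print(name, export_size(name))
```

Declaring the specs once and deriving dimensions from them mirrors the point of this step: the format decision is made before any scene is generated, not patched in at export time.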

Example Prompts

The following prompts are production-ready starting points built for use in Atlabs. Specificity drives output quality; vague prompts produce generic results.

PROMPT 01: IMAGE GENERATION (ATLABS STILL PROMPT)

Skincare brand. Women 28-42. UGC lifestyle product shot.

PROMPT 02: MOTION GENERATION (ATLABS MOTION PROMPT)

Fitness supplement. TikTok hook scene. 3 seconds.

PROMPT 03: PRODUCT REVEAL SCENE (ATLABS SCENE CONTROL)

DTC food brand. Mid-sequence product reveal. Facebook feed.

The Impact of This Approach

Teams using the Atlabs workflow do not just produce content faster. They build a different kind of creative intelligence, one that compounds with every testing cycle.

Iteration velocity is the most immediate impact. A brand running Atlabs.ai can go from brief to tested creative in under 48 hours. That compression changes the economics of performance marketing entirely.

Creative quality improves with volume, not despite it. When iteration is cheap and modular, teams explore more directions, fail faster, and converge on high-performance assets with more data behind them.

Scalable performance is the compound outcome. A library of modular, platform-ready scene assets that can be recombined, refreshed, and tested continuously is a durable creative infrastructure. It does not degrade over time the way a single hero video does.

Brands using Atlabs as a full production system, not just a generation tool, report shorter time-to-winner, higher creative throughput, and a structural reduction in cost-per-tested-creative.